Moon Mappers citizen science project now public, and statistics show it works!

Written by

Emily Lakdawalla

March 29, 2012

Last week, Pamela Gay of CosmoQuest announced that their Moon Mappers citizen science project is out of its beta phase and ready for prime time. Moon Mappers enlists the help of the public to perform the gargantuan task of mapping the sizes and positions of craters photographed on the Moon by Lunar Reconnaissance Orbiter. Crater counting is the most powerful tool geologists have for figuring out how old planetary surfaces are. But when you have terabytes of data, it's simply impossible for one scientist to count all the craters, even with the help of several ~~work drones~~ graduate students.

It sounds like a great idea (at least to those hapless graduate students) to enlist the aid of enthusiastic public workers in this task. On the other hand, it's also reasonable for scientists to be skeptical -- or at least concerned -- about the quality of the statistical data being gathered by those members of the public. Can the unwashed masses really perform crater counting well enough for the data set that they build to be used as the basis for Real Science?

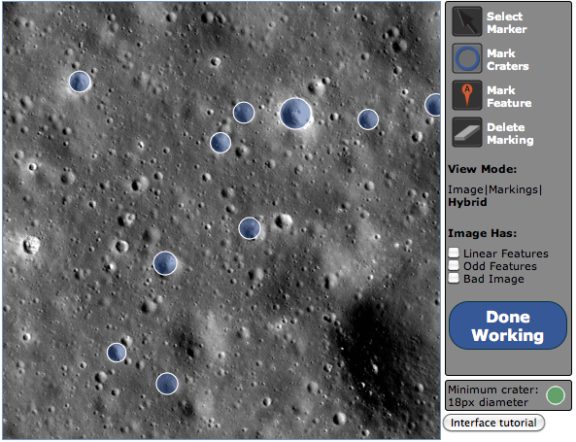

With data acquired through the beta period, Moon Mappers has established that a large group of volunteer crater counters does, in fact, produce a high-quality data set. Here's how they did that. They selected two Lunar Reconnaissance Orbiter images of the Moon, one from the Apollo 15 landing site region and one from the "mafic mound" region in the south pole-Aitken basin. Postdoctoral researcher Stuart Robbins mapped the craters on both regions. Then the public was invited to map the craters in the same images using the Moon Mappers tool. The Moon Mappers interface splits the huge images into much smaller pieces, roughly 500 pixels square, and provides users with tools to draw circles on top of the images wherever there are craters, limiting users to identifying craters 18 pixels across and larger. (They do this at a number of different resolutions, allowing users to map craters at all diameters within the image.) This combination of image size and crater size means that users usually need to spend only thirty seconds on any given image, a pace that seems to keep users from feeling that the work of poring over images is too tedious.

A total of 210 users responded to the call. During the beta period, each small piece of the two big photos was mapped by three to seven users. The resulting maps of craters matched very well with Stuart's, with 94% of the craters mapped by Stuart being identified by the users. Stuart estimates that professional workers' maps of craters may differ by up to 10%, so this match is remarkably good -- in terms of the craters that Stuart mapped. And it is far superior to the 74% match produced by a computer program designed to map craters automatically.

Since Moon Mappers users have to create an identity and log in each time they work, users' productivity and performance can be tracked on an individual basis. Pamela showed that there was good correlation between the number of craters mapped by an individual and the accuracy of their maps (compared to Stuart's). The statistics were dominated by two users who went on mapping sprees, spending (in one case) an entire weekend drawing little circles on lunar images. These two users, fortunately, were also among the most accurate.

While the match to Stuart's map of craters was very good, there was a false positive rate of 17%, meaning that 17% of the craters identified by the public were not identified by Stuart. Pamela told me that there are likely three main reasons for these false positives, one of which can be addressed immediately, one of which will be addressed with better statistics (more users), and one of which will be addressed with a subsequent Moon Mappers project.
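To make the two percentages concrete, here is a minimal sketch of how a match rate and a false positive rate could be computed once each crater has been assigned an ID. The function names and the catalog numbers are invented for illustration; they are not taken from the Moon Mappers analysis.

```python
def match_rate(reference_ids, volunteer_ids):
    """Fraction of the reference craters that the volunteers also found."""
    reference, volunteer = set(reference_ids), set(volunteer_ids)
    return len(reference & volunteer) / len(reference)


def false_positive_rate(reference_ids, volunteer_ids):
    """Fraction of the volunteers' craters that are absent from the reference map."""
    reference, volunteer = set(reference_ids), set(volunteer_ids)
    return len(volunteer - reference) / len(volunteer)


# Made-up catalog: 100 reference craters; volunteers find 94 of them plus 19 extras.
reference_ids = range(100)
volunteer_ids = list(range(94)) + list(range(1000, 1019))

recall = match_rate(reference_ids, volunteer_ids)
fpr = false_positive_rate(reference_ids, volunteer_ids)
print(f"match rate {recall:.0%}, false positive rate {fpr:.0%}")
```

The two rates use different denominators (the expert's craters for the match rate, the volunteers' craters for the false positive rate), which is why a high match rate and a noticeable false positive rate can coexist.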

The first source of false positives has to do with the size cutoff of 18 pixels. When users click to identify a crater and then find that the crater is smaller than the size cutoff, rather than delete the circle, they usually leave the 18-pixel circle on the map because "they feel morally obligated to mark it," in Pamela's terms. I can totally relate to this -- it's a crater, and I can see it, so it should be mapped, right? What that means is that there is a spike in the Moon Mappers database of craters with 18-pixel diameters, which do not exist in Stuart's map. An obvious and easy way to fix this is simply to allow users to map craters down to 18 pixels across, but throw out all crater measurements smaller than 20 pixels in diameter.
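The proposed fix amounts to a simple filter applied after the fact. The sketch below assumes each mark is stored as an `(x, y, diameter)` tuple in pixels; that layout and the names used are hypothetical, not the project's actual data format.

```python
MARK_MIN_PX = 18   # smallest circle the interface lets a user draw
KEEP_MIN_PX = 20   # smallest diameter kept for analysis


def drop_small_marks(marks, keep_min_px=KEEP_MIN_PX):
    """Keep only marks whose diameter is at least keep_min_px."""
    return [mark for mark in marks if mark[2] >= keep_min_px]


# Made-up marks: three sit at or near the 18-pixel floor and are discarded.
marks = [
    (10.0, 12.0, 18.0),
    (50.0, 40.0, 18.0),
    (75.0, 80.0, 19.5),
    (120.0, 60.0, 20.0),
    (200.0, 210.0, 42.0),
]
kept = drop_small_marks(marks)
```

Users can still mark the small craters they feel obliged to mark, and the pile-up at the minimum size never reaches the final catalog.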

The second source of false positives has to do with how the algorithm matches craters from one user's map to another's. If two users map the same crater but they did so with too much mismatch in position or diameter, the algorithm won't be able to determine that they are the same crater, and will call them two different craters instead. With more users doing mapping, Moon Mappers will be able to throw out any craters identified by a relatively small number of users, keeping only those that most users agree upon, reducing these false positives (and also improving the quality of the final map).
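The matching-and-voting idea can be sketched as follows. This is not the Moon Mappers algorithm, whose details are not given here; it is a simple greedy grouping with assumed tolerances (`pos_tol`, `size_tol`) and an assumed vote threshold (`min_users`), all of them hypothetical.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class Mark:
    user: str
    x: float
    y: float
    d: float  # diameter in pixels


def same_crater(a, b, pos_tol=0.5, size_tol=0.25):
    """Treat two marks as one crater if centers and diameters roughly agree."""
    scale = (a.d + b.d) / 2
    close = hypot(a.x - b.x, a.y - b.y) <= pos_tol * scale
    similar = abs(a.d - b.d) <= size_tol * scale
    return close and similar


def consensus_craters(marks, min_users=3):
    """Greedily group marks, then keep groups marked by at least min_users users."""
    groups = []
    for mark in marks:
        for group in groups:
            if same_crater(group[0], mark):
                group.append(mark)
                break
        else:
            groups.append([mark])
    craters = []
    for group in groups:
        if len({m.user for m in group}) >= min_users:
            n = len(group)
            craters.append((sum(m.x for m in group) / n,
                            sum(m.y for m in group) / n,
                            sum(m.d for m in group) / n))
    return craters


# Made-up marks: three users agree on one crater; a fourth marks something alone.
marks = [
    Mark("a", 100, 100, 30),
    Mark("b", 102, 99, 32),
    Mark("c", 98, 101, 28),
    Mark("d", 300, 300, 20),
]
craters = consensus_craters(marks)
```

With tolerances this loose, the three nearby marks merge into one crater and the lone mark is dropped. Tightening the tolerances would split marks of the same crater into separate groups, which is the kind of false positive described above.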

The third source of false positives has to do with degraded craters. Fresh craters have nice crisp edges that are easy to map. But with time, lunar craters' rims are worn down into smooth, rounded humps whose exact edges are difficult to determine. When I tried out the Moon Mappers interface, this was my biggest problem -- I couldn't figure out how degraded a crater could be before it was too degraded to map, and it was also hard to figure out where to map its rim. When he made his map, Stuart no doubt had a very specific picture in his head of the most degraded crater he was willing to map, and ignored all the worse ones. Members of the public, when they stare at lunar images, will have different pictures in their heads, and tend to err on the side of mapping more craters, doing their best to give each crater its due. These degraded craters are probably the biggest source of mismatch between different professional workers' maps of the same region, and this is the hardest one to address.

Moon Mappers won't really be able to deal with the degraded-crater problem, but a follow-up project will. Pamela told me that CosmoQuest has been exploring ways to make citizen science projects that can work as sidebar widgets or mobile apps. One promising possibility is an app that presents users with a photo of a single crater (one mapped in the Moon Mappers project by many users, maybe 6 to 10 users). The photo will be accompanied by photos of perhaps four "type" craters of different degradation states, ranging (in Pamela's words) "from 'pristine' to 'is there something there?'" The user will be asked to tap the type image whose condition most closely matches the condition of the test crater. To me, this sounds like a terrific "waiting in line" app, and it's also one that my five-year-old could actually handle. ("Same" and "different" comparisons are a major component of the kindergarten curriculum.)

The accuracy of citizen scientists is the biggest concern that professionals have when presented with the opportunity of using volunteer citizen science labor for a research project, and it's a reasonable concern to have. With these statistics from the beta period of Moon Mappers, I think that the CosmoQuest team has done an excellent job of showing that a well-designed project can, in fact, produce data that is reliable enough to be used for professional research projects. I hope to see more professionals unloading some of their data gathering tasks onto citizen scientists in the future! When these projects work, everyone benefits: scientists amass data sets that they could have no hope of producing themselves, and members of the public make small but real contributions to the production of science from space missions.

These results were presented at a press briefing and a poster presentation at the Lunar and Planetary Science Conference last week.
