Steve Albers • Apr 20, 2016

Synthesizing DSCOVR-like Images Using Atmospheric and Geophysical Data

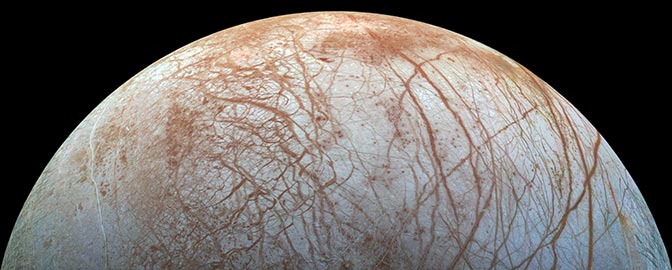

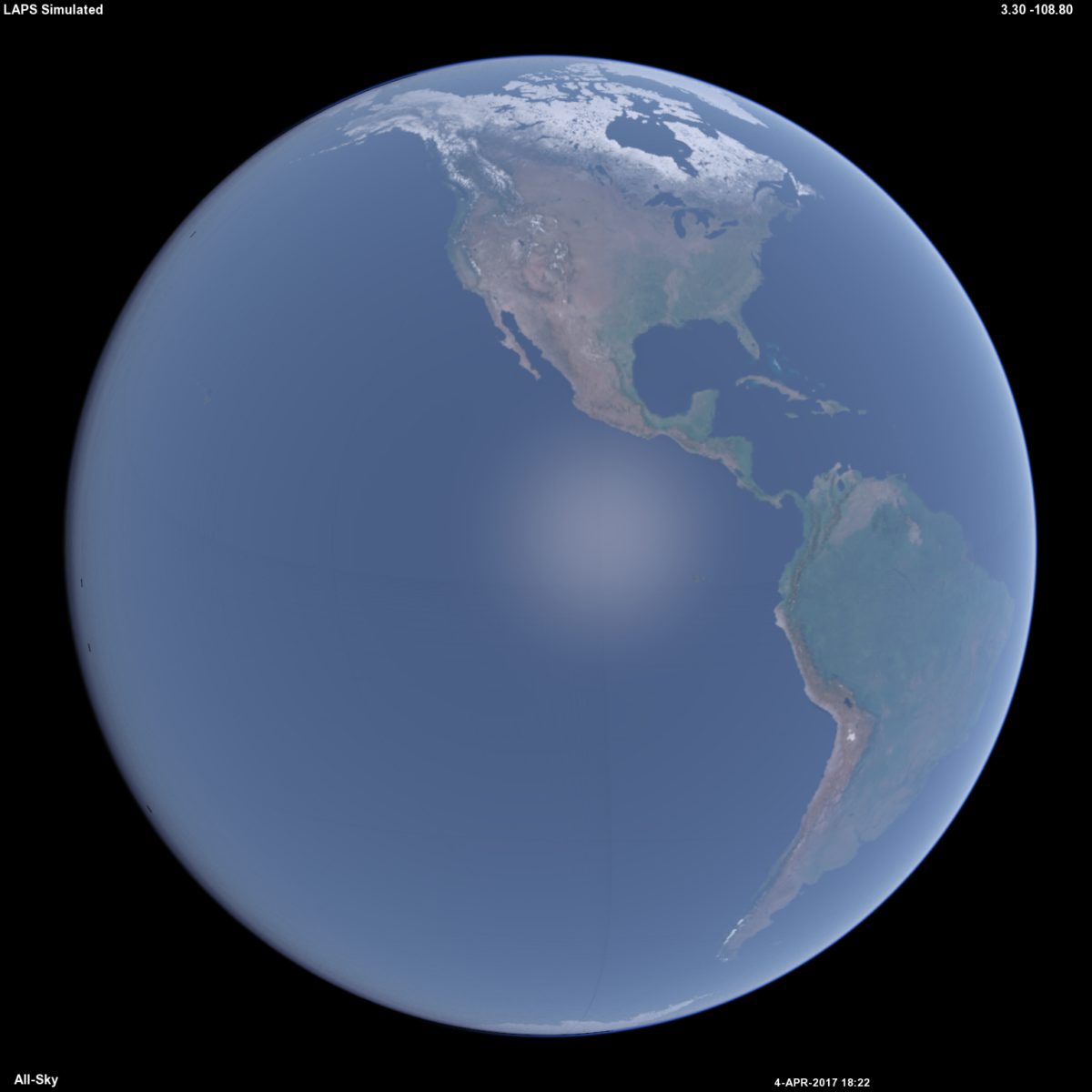

How inspiring it is to now have EPIC camera imagery from DSCOVR available on a regular basis! There is so much to see in it: literally the whole world. Why does our planet look the way it does? How do light's interactions with land, clouds, water, snow, ice, gases, and various aerosols all come together? One way to find out is to try to synthesize the DSCOVR view from various "building blocks" of geophysical and atmospheric data, which is what I've been working on recently. The techniques used in the software were first developed to work at ground level, matching simulated sky imagery with photos from an all-sky camera (e.g. above). After getting decent results, I wanted to look from higher and higher vantage points: first from mountain tops, then up to the stratosphere. Next came low Earth orbit, then geosynchronous orbit, and now lunar distance, where the geometric perspective is just about the same as what we see from DSCOVR.

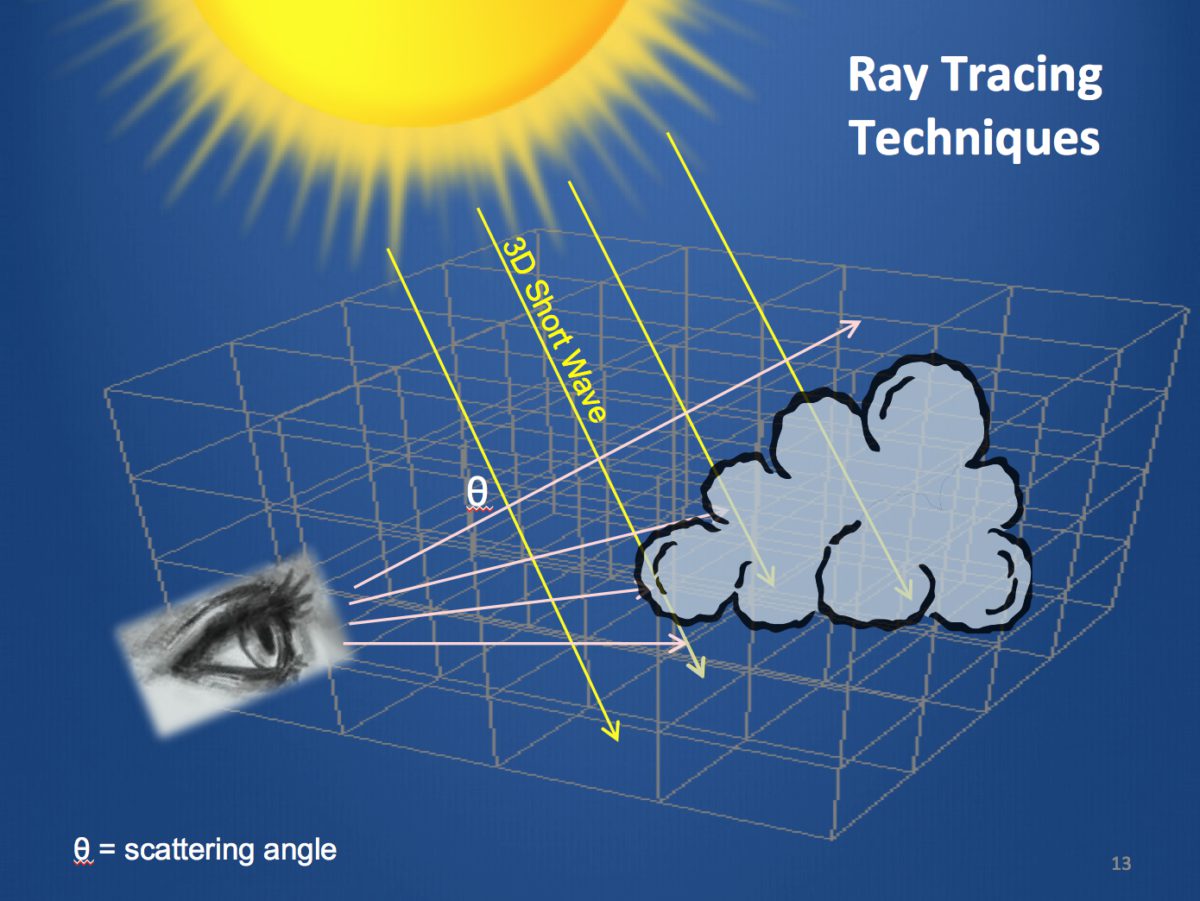

I'm employing some fairly common ray tracing (image rendering) techniques, with an emphasis on including the physical processes that affect visible radiation in the Earth's atmosphere and at the surface. Light rays from the sun, and their associated photons, can be considered to travel in straight lines unless they are reflected or refracted. When light encounters a cloud or the land surface, its bouncing around is called scattering. The angular pattern of scattering depends in interesting ways on the type of cloud or land surface, as well as on the wavelength (color) of the light.
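The most basic operation in any ray tracer is asking where a straight-line ray first meets an object. The sketch below does this for a spherical Earth; the function name, the 6371 km mean radius, and the spherical shape are illustrative assumptions, not details taken from the author's software.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; a perfect sphere is assumed


def ray_sphere_hit(origin, direction, radius=EARTH_RADIUS_KM):
    """Distance along a straight ray to the first point where it meets a
    sphere centred at (0, 0, 0), or None if the ray misses.

    origin and direction are (x, y, z) tuples in km; direction need not be
    normalised (the returned distance is then in units of |direction|).
    """
    ox, oy, oz = origin
    dx, dy, dz = direction
    # Solve |o + t d|^2 = r^2, i.e. a t^2 + b t + c = 0.
    a = dx * dx + dy * dy + dz * dz
    b = 2.0 * (ox * dx + oy * dy + oz * dz)
    c = ox * ox + oy * oy + oz * oz - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None  # ray passes beside the sphere (sky beyond the limb)
    sqrt_disc = math.sqrt(disc)
    t_near = (-b - sqrt_disc) / (2.0 * a)
    t_far = (-b + sqrt_disc) / (2.0 * a)
    if t_near > 0.0:
        return t_near
    if t_far > 0.0:
        return t_far  # ray starts inside the sphere
    return None  # sphere lies entirely behind the ray origin
```

For a viewer roughly 1.5 million km away (about DSCOVR's distance) looking straight at the Earth's center, `ray_sphere_hit((0, 0, 1.5e6), (0, 0, -1))` returns 1.5e6 minus one Earth radius; a ray aimed sideways returns `None`, and in a full renderer that pixel would show only atmosphere or space.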

Many, perhaps most, light rays undergo only a single scattering event, and this is the easiest situation to calculate. The scattering occurs in partially random directions according to known phase functions (angular scattering probability distributions). Multiple scattering gets more complicated, since light can bounce several times in the atmosphere and even hundreds of times in a thick cloud. I've been working on ways to approximate multiple scattering as an equivalent single scattering event, which I hope will give a close enough result for many applications. Another approach, known as the Monte Carlo method, is something I may try eventually. There we explicitly let individual photons bounce around in the scene, scattering in partially random directions according to the known phase functions. This is more computationally intensive, and even then some approximations are needed.
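To make the Monte Carlo idea concrete, here is a minimal sketch of photons random-walking through a plane-parallel cloud layer. The Henyey-Greenstein phase function, the asymmetry parameter g = 0.85, normal incidence, and all function names are assumptions chosen for illustration; the post does not say which phase functions or geometry the author's code uses.

```python
import math
import random


def sample_hg_cos(g, rng):
    """Draw cos(scattering angle) from the Henyey-Greenstein phase function."""
    xi = rng.random()
    if abs(g) < 1e-6:
        return 2.0 * xi - 1.0  # isotropic limit
    frac = (1.0 - g * g) / (1.0 - g + 2.0 * g * xi)
    return (1.0 + g * g - frac * frac) / (2.0 * g)


def slab_reflectance(tau_total, g=0.85, albedo=1.0, n_photons=20000, seed=1):
    """Monte Carlo estimate of the fraction of photons, entering a
    plane-parallel layer straight down from the top, that escape back out
    of the top (i.e. are scattered back toward space)."""
    rng = random.Random(seed)
    reflected = 0
    for _ in range(n_photons):
        tau, mu = 0.0, 1.0  # optical depth below cloud top; mu > 0 is downward
        while True:
            # Exponentially distributed free path, converted to vertical depth.
            tau += -math.log(1.0 - rng.random()) * mu
            if tau < 0.0:
                reflected += 1
                break
            if tau > tau_total:
                break  # transmitted out of the cloud base
            if rng.random() > albedo:
                break  # absorbed
            # Scatter: new direction cosine from the sampled angle and a
            # uniformly random azimuth about the old direction.
            cos_t = sample_hg_cos(g, rng)
            sin_t = math.sqrt(max(0.0, 1.0 - cos_t * cos_t))
            phi = 2.0 * math.pi * rng.random()
            mu = mu * cos_t + math.sqrt(max(0.0, 1.0 - mu * mu)) * sin_t * math.cos(phi)
    return reflected / n_photons
```

Each extra order of optical depth means many more bounces per photon, which is exactly why this approach is expensive for thick clouds.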

So what data do we need to run this simulation? NASA's Next Generation Blue Marble imagery provides high-resolution color land surface information that can be approximately converted into spectral (color-dependent) albedo. There are monthly versions of this imagery to account for seasonal vegetation changes. Over water, I assume that ocean waves have an average tilt of 11 degrees, which helps define the extent of the sun glint. This can be refined later by using a wave model driven by surface wind information; steeper waves will enlarge the glint region. The glint can change shape if it is offset from the Earth's center, or if the wave field varies. It can even turn more orange, particularly if the glint is near the limb, something that wouldn't happen in DSCOVR's imagery. Key atmospheric gases such as nitrogen, oxygen, and ozone are included. The first two produce Rayleigh scattering and are mainly responsible for the blue sky, which we see both from the ground and from space. In fact, the light we see over the oceans (outside of sun glint areas) is mostly atmospheric scattering, and it is the main reason the Blue Marble is blue. This blue cast is nearly ubiquitous over both land and ocean and gradually increases in brightness as we approach the limb.
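Why does wave tilt set the size of the glint? A water facet mirrors the sun into the viewer only if its normal points halfway between the sun and view directions, so each point on the ocean needs a particular facet tilt. The sketch computes that tilt and weights it with a Gaussian slope distribution whose width corresponds to the 11 degree typical tilt. The Gaussian (Cox-Munk-style) form and the function names are assumptions for illustration, not the author's stated method.

```python
import math


def glint_facet_tilt_deg(sun_zenith_deg, view_zenith_deg, rel_azimuth_deg):
    """Tilt from horizontal (degrees) that a wave facet needs in order to
    mirror the sun into the viewer. rel_azimuth_deg is the viewer's azimuth
    measured from the sun's azimuth; 180 means the classic specular geometry."""
    ts, tv, phi = (math.radians(a) for a in (sun_zenith_deg, view_zenith_deg, rel_azimuth_deg))
    sun = (math.sin(ts), 0.0, math.cos(ts))  # unit vector toward the sun
    view = (math.sin(tv) * math.cos(phi), math.sin(tv) * math.sin(phi), math.cos(tv))
    # The required mirror normal is the half-way vector between the two.
    h = [s + v for s, v in zip(sun, view)]
    norm = math.sqrt(sum(c * c for c in h))
    return math.degrees(math.acos(min(1.0, max(-1.0, h[2] / norm))))


def glint_weight(tilt_deg, typical_tilt_deg=11.0):
    """Relative likelihood of a facet with this tilt, assuming an isotropic
    Gaussian distribution of surface slopes (an illustrative simplification)."""
    sigma = math.tan(math.radians(typical_tilt_deg))
    return math.exp(-(math.tan(math.radians(tilt_deg)) / sigma) ** 2)
```

For example, looking straight down with the sun 20 degrees from the zenith requires a 10 degree facet tilt, well within an 11 degree typical tilt, so that spot sits inside the glint. Increasing `typical_tilt_deg` (steeper waves) raises the weight at larger tilts and so enlarges the glint region.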

Ozone can produce a significant coloring effect when light travels at glancing angles through the stratosphere. Rather than preferentially scattering blue light (as nitrogen and oxygen do), ozone simply absorbs light. We may be more familiar with its ultraviolet absorption, which protects us from things like sunburn. However, there is also weaker absorption of green and red light in the so-called Chappuis bands, which leaves a blue window in ozone's visible spectrum. From space, a rising or setting sun can appear red when it is right near the Earth's limb (shining through the troposphere), yet it can actually reverse color and appear slightly blue when shining at a grazing angle through the ozone-rich stratosphere. We can see a similar effect from the ground as a bluish edge to the Earth's shadow during a lunar eclipse. Ozone's coloring effects are almost imperceptible when looking up at the sky at midday, while during early twilight the blue of the overhead sky comes as much from ozone absorption as from Rayleigh scattering. Thus there is more to the familiar question "Why is the sky blue?" than the usual answer suggests.
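The reason glancing angles matter so much is geometric: a ray skimming the Earth spends far longer inside a thin spherical layer than a vertical ray does, and absorption grows exponentially with path length (Beer-Lambert law). The sketch computes the path through a spherical shell for a ray with a given tangent (closest-approach) altitude. The 20 to 40 km shell bounds are round illustrative numbers for an ozone-rich layer, and the per-color extinction coefficients are left as inputs rather than invented values.

```python
import math

EARTH_RADIUS_KM = 6371.0  # spherical Earth assumed


def shell_path_km(tangent_alt_km, bottom_km, top_km, radius=EARTH_RADIUS_KM):
    """Length (km) of a straight ray's path inside the spherical shell
    between bottom_km and top_km altitude, for a ray whose closest approach
    to the Earth is tangent_alt_km. Entry and exit legs are both counted."""
    r_t = radius + tangent_alt_km

    def half_chord(alt_km):
        r = radius + alt_km
        return math.sqrt(max(0.0, r * r - r_t * r_t))

    if tangent_alt_km >= top_km:
        return 0.0  # ray passes above the layer
    return 2.0 * (half_chord(top_km) - half_chord(max(bottom_km, tangent_alt_km)))


def transmittance(extinction_per_km, path_km):
    """Beer-Lambert transmission along a path with uniform extinction."""
    return math.exp(-extinction_per_km * path_km)
```

A ray grazing the bottom of a 20 to 40 km layer travels about 1012 km inside it, roughly 50 times the 20 km vertical thickness. Evaluating `transmittance` with separate red, green, and blue extinction coefficients along such a path shows how even weak Chappuis absorption can visibly tint the light.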

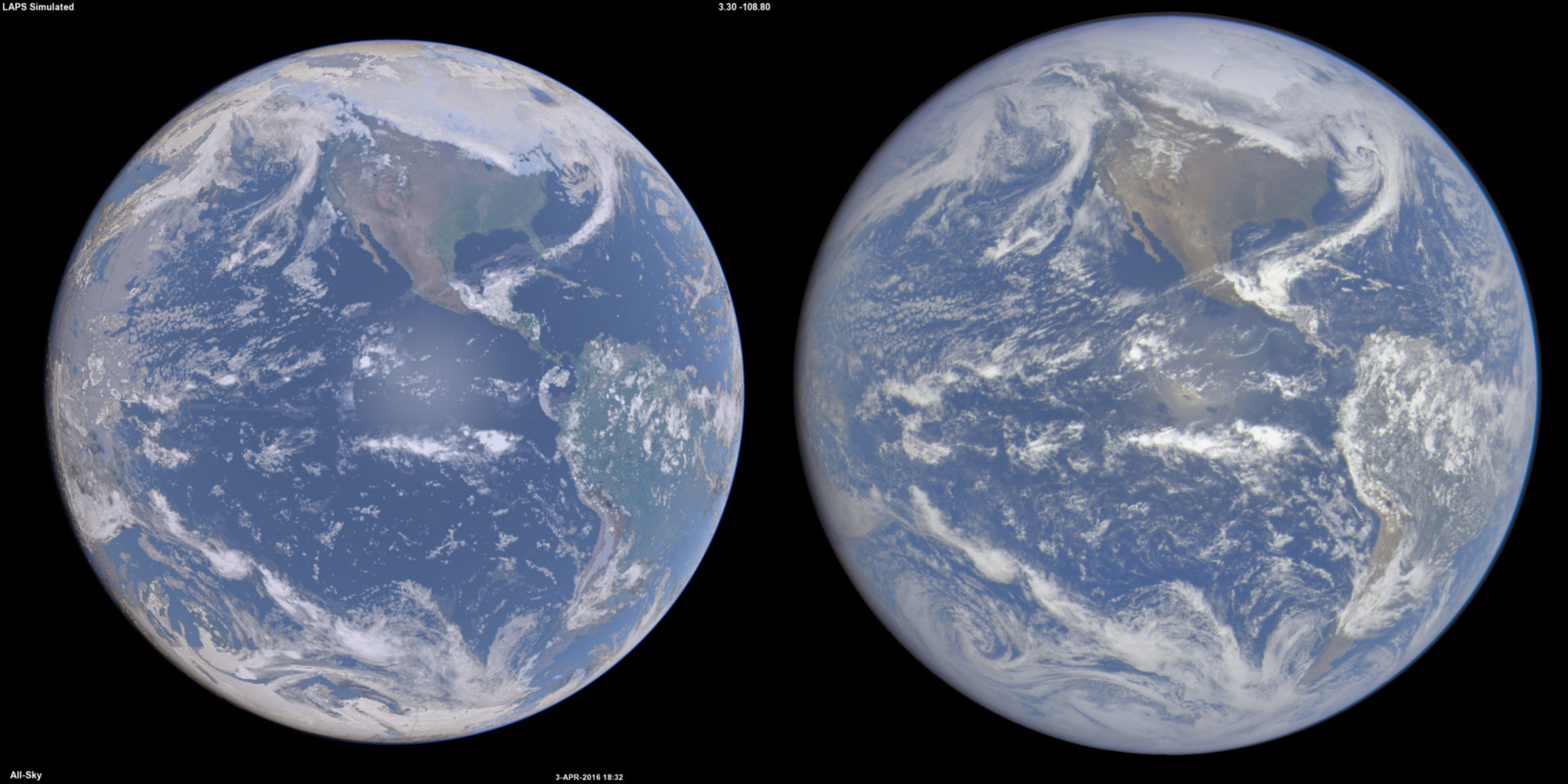

Clouds are of course a big part of the simulations. 3-D fields quantifying the density and size distributions of the constituent hydrometeors (cloud liquid, cloud ice, etc.) can be obtained from a Numerical Weather Prediction (NWP) model. In NWP, data assimilation procedures combine weather satellite imagery, measured quasi-vertical profiles of temperature and humidity, surface and other observations, and prior forecasts into 3-D “analyses” that describe the state of the atmosphere, including clouds, at any given time. In this case I'm using a cloud analysis from the Local Analysis and Prediction System (LAPS) that matches the time of the DSCOVR image. This system was selected because its cloud analysis is designed to closely match the locations of actual clouds, particularly in the daylight hemisphere. NWP analyses are also used to initialize forecast models that project the initial state (including the clouds) into the future.
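One common way to turn model hydrometeor fields into something a renderer can use is to integrate the cloud water into a liquid water path and then estimate optical depth from it. The sketch below uses the standard approximation tau ≈ 3 LWP / (2 rho_w r_eff), valid when droplets are much larger than the wavelength. Whether LAPS or the author's renderer uses exactly this relation is not stated in the post; the function names and input layout are hypothetical.

```python
RHO_WATER_KG_M3 = 1000.0  # density of liquid water


def liquid_water_path_g_m2(cloud_water_kg_kg, air_density_kg_m3, layer_thickness_m):
    """Column-integrated cloud liquid (g/m^2) from per-layer mixing ratio
    (kg/kg), air density (kg/m^3), and layer thickness (m)."""
    lwp_kg_m2 = sum(q * rho * dz for q, rho, dz in
                    zip(cloud_water_kg_kg, air_density_kg_m3, layer_thickness_m))
    return 1000.0 * lwp_kg_m2


def cloud_optical_depth(lwp_g_m2, r_eff_um):
    """Visible optical depth of a liquid cloud, using
    tau ~= 3 LWP / (2 rho_w r_eff) (extinction efficiency ~2)."""
    lwp_kg_m2 = lwp_g_m2 / 1000.0
    r_eff_m = r_eff_um * 1e-6
    return 3.0 * lwp_kg_m2 / (2.0 * RHO_WATER_KG_M3 * r_eff_m)
```

For instance, a 1 km thick layer holding 0.1 g of cloud water per kg of air (at an air density of 1 kg/m^3) gives a liquid water path of 100 g/m^2, which with 10 micron droplets corresponds to an optical depth of about 15.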

One aspect of evaluating how well the synthetic image matches the actual one is whether the clouds lie in the correct locations. Another is whether they have the correct brightness, a sensitive indicator of the model's hydrometeors and the associated radiative transfer. All else being equal, optically thicker clouds are generally brighter as seen from above, because more light is scattered back toward space. This is the opposite of what we see when we're underneath a cloud. Cloud brightness (reflectance) changes noticeably over a wide range of cloud thickness, allowing optical depth (tau) values from … Simulated Weather Imagery (SWIM) page, including a link to a PowerPoint presentation based on a recent seminar.
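The claim that reflectance keeps changing over a wide range of optical depth can be illustrated with a textbook two-stream approximation for a non-absorbing cloud, R ≈ (1 - g) tau / (2 + (1 - g) tau). This is a swapped-in simple model for illustration, not the author's radiative transfer scheme, and the asymmetry parameter g = 0.85 is an assumed typical value for water clouds.

```python
def cloud_reflectance(tau, g=0.85):
    """Two-stream reflectance of a non-absorbing cloud layer of optical
    depth tau with scattering asymmetry parameter g."""
    x = (1.0 - g) * tau  # scaled optical depth
    return x / (2.0 + x)


# Reflectance rises steadily from thin to thick clouds and only slowly
# approaches 1, so brightness stays sensitive to tau over a wide range.
for tau in (1, 3, 10, 30, 100):
    print(f"tau = {tau:>3}: R = {cloud_reflectance(tau):.2f}")
```

Because the curve flattens but never quite saturates, a brightness mismatch between synthetic and real clouds points fairly directly at errors in the model's cloud water or droplet sizes.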

Aerosols are defined here as particles other than cloud particles, such as haze, dust, or smoke. The ways to define aerosols are discussed in this interesting blog post. Aerosols are an important consideration, and they scatter light mostly in a forward direction. They can be characterized by optical thickness (a measure of opacity) and scale height (indicating their vertical distribution). Aerosol sizes typically follow a bimodal distribution: the larger dust particles scatter light more tightly forward, while the smaller ones scatter with a broader angular distribution. Both aerosols and ozone help soften the appearance of the Earth near the limb in the DSCOVR images, providing a sensitive test of the simulation. At present, general average values are used for aerosols and ozone; this can be improved by using a more detailed geographic and seasonal climatology. There are also plans to use real-time aerosols and ozone (along with clouds) from NOAA's FIM model.
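Optical thickness and scale height together define a simple vertical aerosol profile. The sketch assumes extinction falls off exponentially with altitude, which is a common idealization; the function names and any example values are hypothetical rather than the averages used in the author's runs.

```python
import math


def aerosol_extinction_per_km(alt_km, total_aod, scale_height_km):
    """Extinction coefficient (1/km) at alt_km for an exponential profile
    whose integral over the whole column equals total_aod."""
    return (total_aod / scale_height_km) * math.exp(-alt_km / scale_height_km)


def aerosol_od_above(alt_km, total_aod, scale_height_km):
    """Aerosol optical depth between alt_km and the top of the atmosphere."""
    return total_aod * math.exp(-alt_km / scale_height_km)
```

With this form, a ray tracer can look up the aerosol extinction at any point along a ray; a smaller scale height concentrates the haze near the ground, which changes how much of it a grazing ray near the limb passes through.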

The ray-traced light intensities can be converted into physical units of spectral radiance by factoring in the solar spectrum. To make a digital RGB color image, I took these steps to calculate what the image count values should be:

1) Weight the spectral radiance by the so-called tristimulus color matching functions and integrate over wavelength, to take color perception into account. A good example of the benefit is how the blue sky looks. The violet component of the light is beyond what the blue phosphor can show, so a little bit of red light is mixed in. This is analogous to what our eye-brain combination does (for those with typical color vision): we perceive spectrally pure violet light much like purple (a mix of blue with a little red).

2) Apply the 3x3 transfer matrix that converts the XYZ image into the RGB color space of the computer monitor. This is needed in part because the monitor's primary colors aren't spectrally pure. I'm assuming that the sun is pure white (as it very nearly is when seen from space). Correspondingly, I like to set my computer monitor's color temperature to 5800 K, a value nearly equal to the sun's surface temperature.

3) Apply a gamma correction to match the non-linear brightness scaling of the monitor. This is important if we want the displayed image brightness to be directly proportional to the actual brightness of the scene. Background information about these three steps, with an example for Rayleigh scattering, can be found here.
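The three steps above can be sketched as a small pipeline. Several stand-ins are assumptions: the color matching functions must be supplied by the caller (no tabulated CIE data is reproduced here), the matrix is the standard sRGB (D65 white) one rather than one for a monitor set to 5800 K, the "gamma" step uses the piecewise sRGB transfer curve, and out-of-gamut values are simply clipped. All function names are hypothetical.

```python
# Standard XYZ -> linear sRGB matrix (IEC 61966-2-1, D65 white point).
XYZ_TO_LINEAR_SRGB = (
    (3.2406, -1.5372, -0.4986),
    (-0.9689, 1.8758, 0.0415),
    (0.0557, -0.2040, 1.0570),
)


def _trapezoid(xs, ys):
    """Trapezoidal-rule integral of ys sampled at xs."""
    return sum(0.5 * (ys[i] + ys[i + 1]) * (xs[i + 1] - xs[i]) for i in range(len(xs) - 1))


def spectrum_to_xyz(wavelengths_nm, radiance, xbar, ybar, zbar):
    """Step 1: weight the spectrum by each color matching function (sampled
    on the same wavelength grid) and integrate over wavelength."""
    return tuple(
        _trapezoid(wavelengths_nm, [r * c for r, c in zip(radiance, cmf)])
        for cmf in (xbar, ybar, zbar)
    )


def xyz_to_linear_rgb(xyz):
    """Step 2: 3x3 matrix into the display's primaries (sRGB assumed)."""
    return tuple(sum(m * v for m, v in zip(row, xyz)) for row in XYZ_TO_LINEAR_SRGB)


def encode_gamma(c):
    """Step 3: sRGB transfer curve; out-of-gamut values are clipped first."""
    c = min(1.0, max(0.0, c))
    if c <= 0.0031308:
        return 12.92 * c
    return 1.055 * c ** (1.0 / 2.4) - 0.055


def xyz_to_display_counts(xyz, white_y=1.0, max_count=255):
    """Scale so that luminance white_y maps to full white, then return
    integer image counts for each channel."""
    linear = xyz_to_linear_rgb(tuple(v / white_y for v in xyz))
    return tuple(round(max_count * encode_gamma(c)) for c in linear)
```

Keeping everything linear until the last step is what makes the displayed brightness proportional to the scene brightness; the single `white_y` scale factor plays the role of an exposure setting. Clipping is the crudest way to handle colors such as spectral violet that fall outside the monitor's gamut; the visual tweak described in step 1 (mixing in a little red) arises from the negative matrix terms rather than from the clipping.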

While this is all a work in progress, I believe it should give a realistic color and brightness match if you were sitting (floating) out in space, holding your computer monitor side by side with the Earth. The result has somewhat more subtle colors and contrasts than many Earth images we see. The intent is to make the displayed image brightness proportional to that of the actual scene, without the exaggerated color saturation that is common even in everyday photography. The atmosphere, more fully considered in this rendering, also helps soften the appearance of the underlying landscape.

I took the liberty of tweaking the DSCOVR color balance to get a better match to the synthetic imagery, mainly based on the blue ocean/sky color. I also reduced the DSCOVR image contrast, since there is apparently some saturation at the bright and dark ends. This is conveniently noticeable in the March solar eclipse sequence, where we have a handy gradation of light intensities to examine. The moon could provide another calibration target during lunar transits. The raw DSCOVR data could be used to help produce more visually realistic images, borrowing some of the techniques described above. Meanwhile, maybe an astronaut can try these comparisons sometime? Going forward, there's much to learn here about all aspects of Earth modeling and ray tracing, to really demonstrate that we can model our planet in a holistic and accurate manner.

Other Phases

The DSCOVR satellite is positioned to always view the fully lit side of the Earth. The simulation package can also show us what DSCOVR would theoretically see if it could magically observe from other phase angles.

Above is a simulated crescent Earth view from the same distance as DSCOVR. Compare with an actual image from the Rosetta spacecraft as it flew by Earth. Here is an animated version showing various phases that can be compared with this sequence from the Himawari geosynchronous satellite.
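The phase seen by a distant observer depends only on the phase angle, the angle at the Earth between the directions to the Sun and to the observer. DSCOVR sits near the Sun-Earth line, so its phase angle stays small and it sees a nearly full Earth; a crescent needs a phase angle well beyond 90 degrees. The sketch below uses the standard illuminated-fraction formula for a sphere; the function names are hypothetical.

```python
import math


def phase_angle_deg(earth_to_sun, earth_to_observer):
    """Angle (degrees) at the Earth between the directions to the Sun and
    to the observer; 0 means fully lit, 180 means the night side."""
    dot = sum(a * b for a, b in zip(earth_to_sun, earth_to_observer))
    na = math.sqrt(sum(a * a for a in earth_to_sun))
    nb = math.sqrt(sum(b * b for b in earth_to_observer))
    return math.degrees(math.acos(min(1.0, max(-1.0, dot / (na * nb)))))


def illuminated_fraction(phase_deg):
    """Fraction of the visible disk that is sunlit, for a distant observer."""
    return (1.0 + math.cos(math.radians(phase_deg))) / 2.0
```

For example, a phase angle of 90 degrees gives a half-lit Earth (fraction 0.5), while 150 degrees leaves only a thin crescent of about 7 percent of the disk.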
